Hi Frank,

first of all, unless you are running the application on a raspberry pi, ~60 users is no load to be concerned of on a reasonable machine, we have servers with several thousand active users (clicking in a time window of 2 minutes). ... but users are not users, when these user fire e.g every second a request taking a minute, every server will run out of resources soon. So, in order to assess the situation of this server properly, more details are needed.

Concerning the configuration parameters:

- maxconnections: having maxthreads higher than maxconnections never makes sense, NaviServer should warn you about this on startup

- maxthreads: 128 is quite a large value! On the setup with 2000 concurrent users, we have in the default pool minthreads and maxthreads set to 25. Make sure to have enough db connections configured in your database setup. How many cores are available in this setup?

- connsperthead: unless you have major data-leaks in the application, increase connsperthread to 10000; this is not relevant for the issue.

Common reasons for bad performance are:

- database: You might run out of db-connections (threads will be hanging around idle waiting for db-connections) or simply to slow or to many queries.

- slow clients: when NaviServer is not properly configured, the speed of client connections for uploads and downloads might cause long blocking connection threads. This will not happen, when spooler and writer threads are properly configured.

- setup without multiple thread pools: In case, a site admin knows to have several very slow requests (taking each multiple seconds) the recommended setup is to have multiple thread pools, such that the slow requests are directed to special pools, but no other users are harmed.

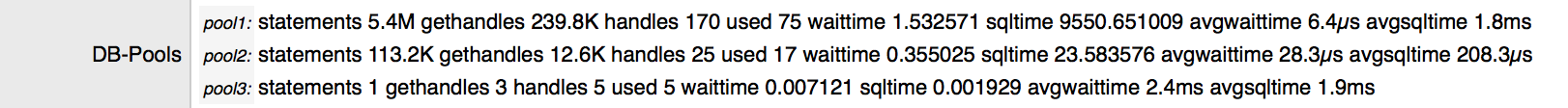

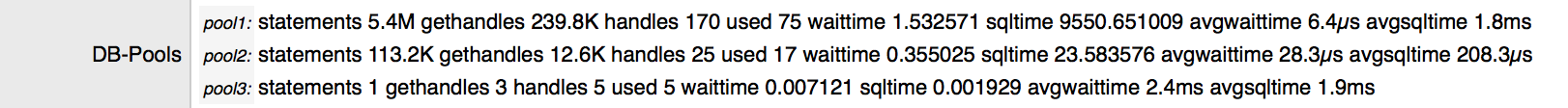

How to check a site's characteristics? An easy way to get an overview is nstats module of NaviServer. One get from this module on the "Server" page among many other this the db-characteristics from the db-pools, like e.g.:

The avgwaittime is the average time for obtaining a db-handle, and avgsqltime is the average time of an SQL query.

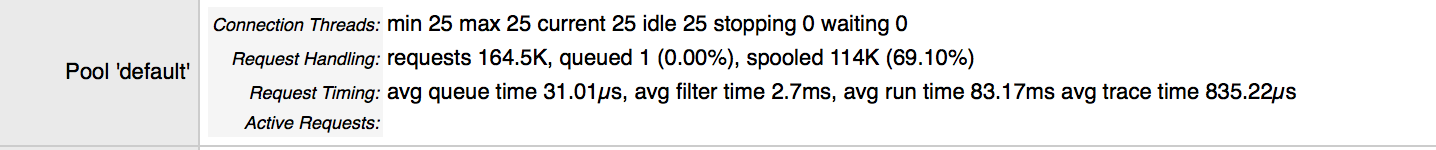

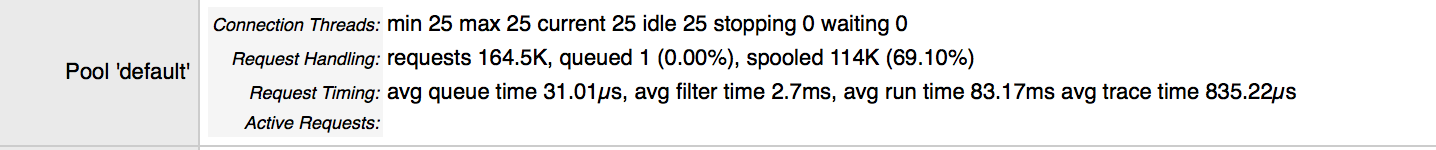

The same page provides as well information about the configured pools, like e.g. from the default connection thread pool:

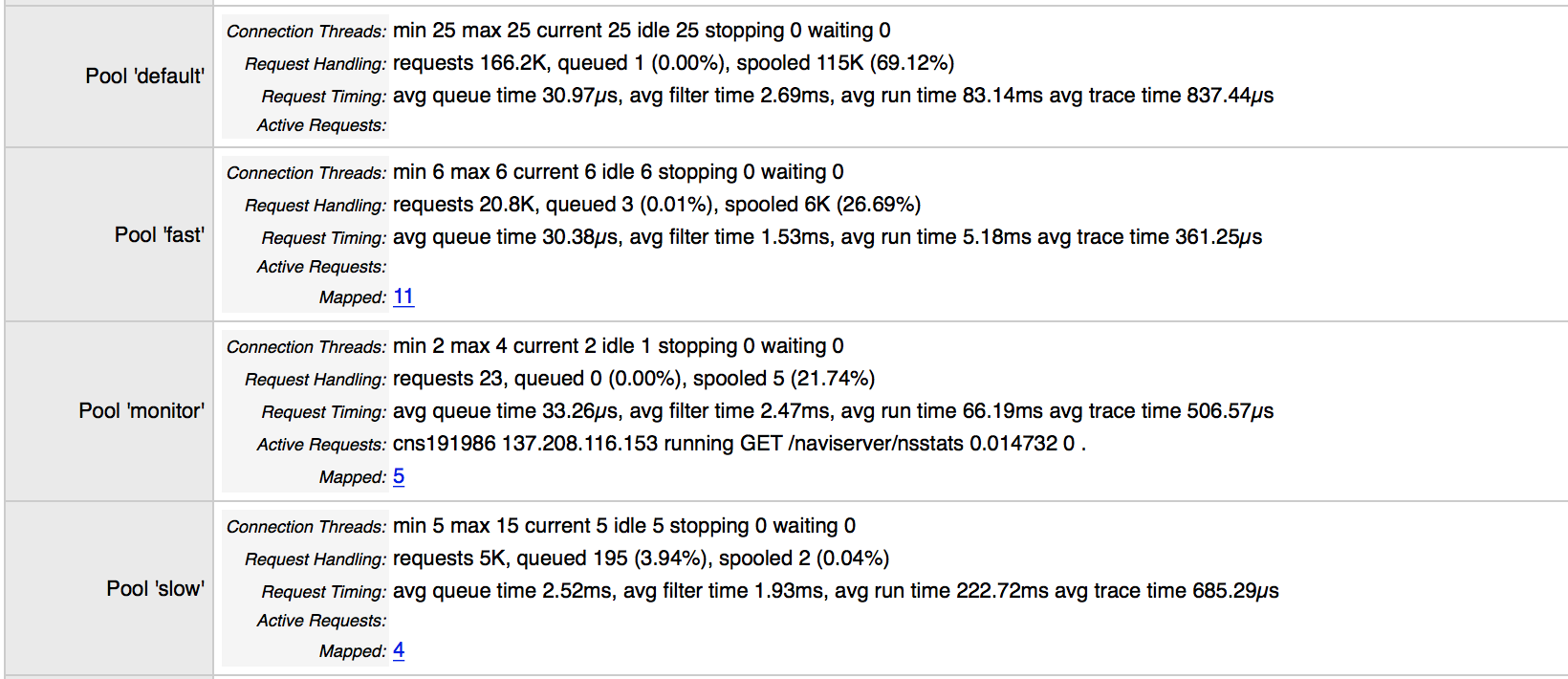

When the queue time goes up, you should become alert. A high filter time is an indicator of permission problems, the avg runtime gives hints about the need of splitting connection pools.

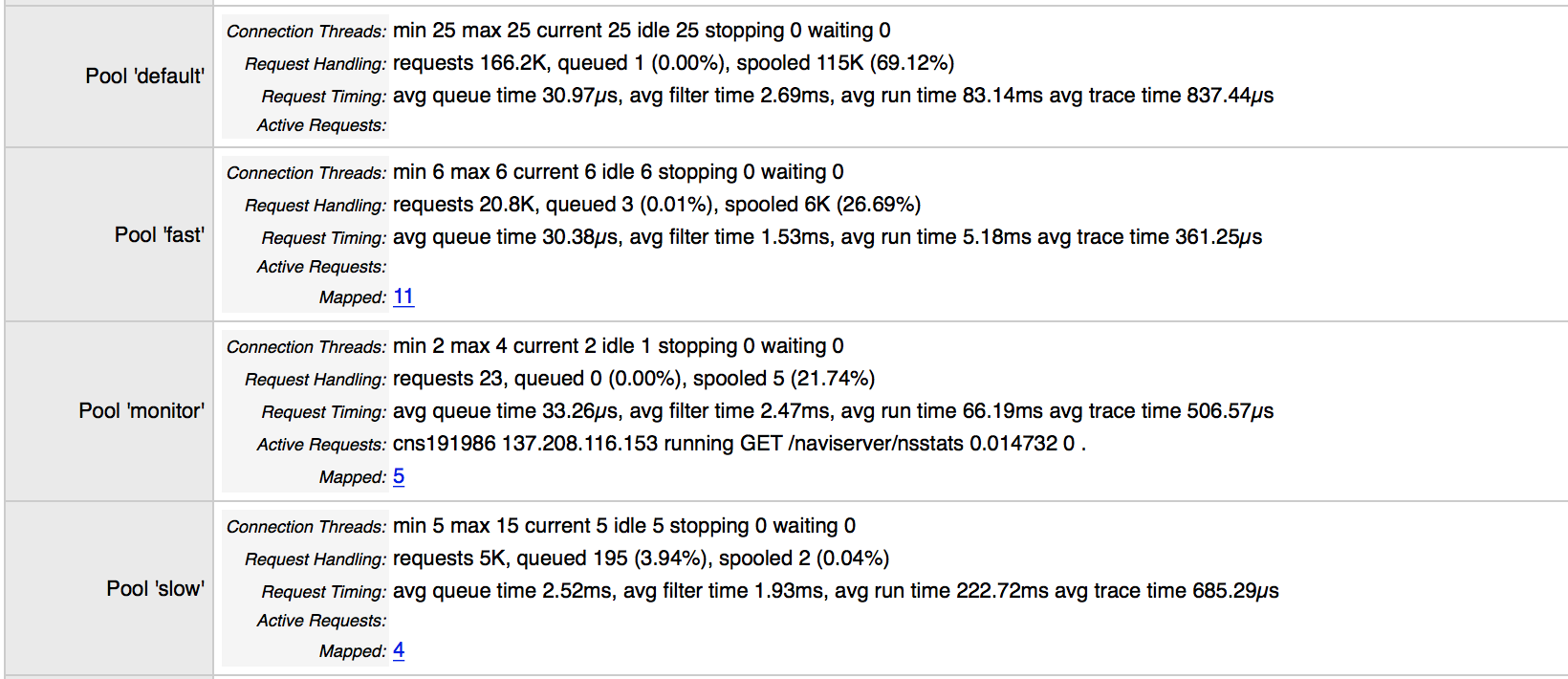

Below, you see information about multiple connection pools, where e.g. the "monitor" pools is used on this site for monitoring applications (like munin) and for admins to adjust the setup at runtime when necessary (ds/shell, etc.)

Hope this helps!